The Sovereignty Stack

AI sovereignty isn't theoretical. You can build it today. Here's what actually works, and what doesn't.

AI sovereignty doesn’t require becoming a cypherpunk. If you want the broader thesis first, start with the case for sovereign AI. You can assemble a working sovereignty stack from production-ready DeAI (decentralised AI) infrastructure today. But the choices involve real tradeoffs between cost, maturity, and decentralisation.

What follows is what you actually need to run your own AI infrastructure. Not ideology. Specific protocols, real costs, and honest assessments of what’s ready and what isn’t.

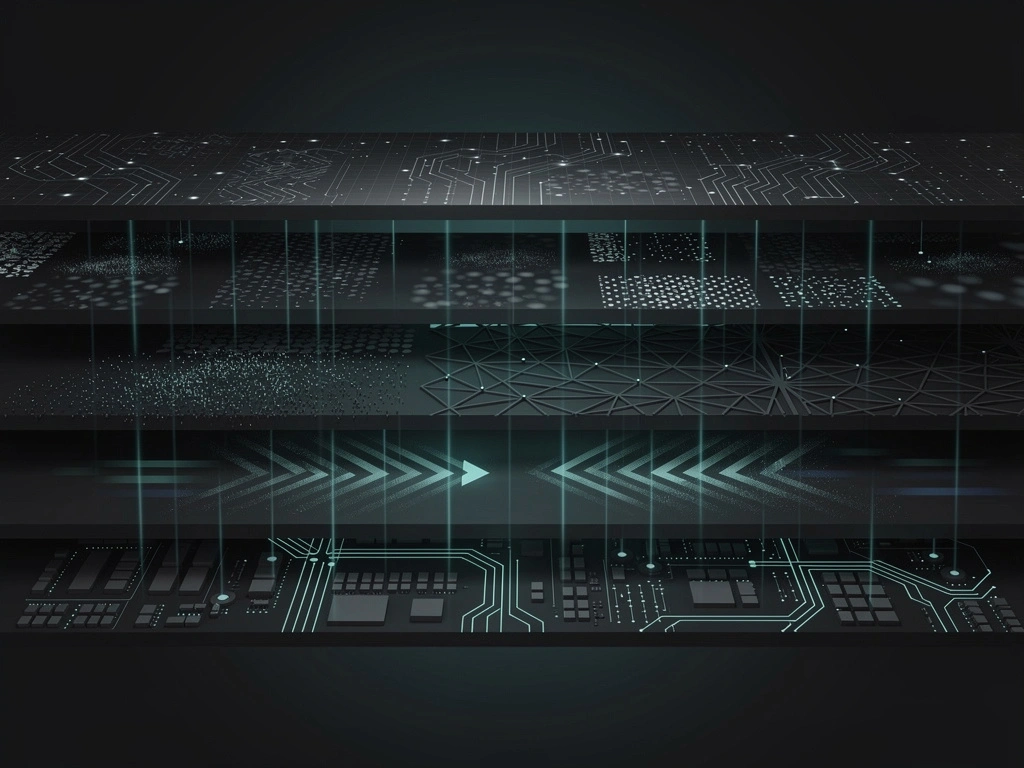

The five-layer model

True AI sovereignty requires control across five interconnected layers. Each layer offers varying degrees of decentralisation, maturity, and tradeoffs. No single protocol solves all layers. Sovereignty requires composition.

[Figure: The Five-Layer Sovereignty Stack (compute, data, model, inference, coordination)]

Each layer is a choice. Each choice has tradeoffs. Some layers are production-ready. Others are experimental. Let’s go through them honestly.

COMPUTE: Where your models run

Sovereign compute means controlling the physical infrastructure processing AI workloads. Without compute sovereignty, every other layer sits on someone else’s foundation.

Akash Network: The most credible option

Akash is the longest-running decentralised cloud marketplace. Mainnet since September 2020. Real revenue: $3.15 million annually, up 128% year-over-year. (Messari Akash Report)

What works: No KYC for deployments. Reverse auction model where providers compete on price. Self-custodial. H100s from roughly $1.49 per GPU-hour (Akash Network) versus $6.88 on-demand on AWS p5 instances (AWS Pricing, post-June 2025 reduction). That’s roughly 78% cheaper, though without enforceable SLAs.

What doesn’t: 69 providers is not a cloud marketplace. It’s a pilot programme. Provider count declined through 2025 despite usage growth. No managed services. Chain migration risk as Cosmos deprecation is planned. You’re trading reliability and scale for cost and sovereignty.
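To make the reverse-auction mechanics and the price gap concrete, here is a minimal Python sketch. The `Bid` type, the provider names, and the two losing bid prices are hypothetical; only the $1.49 and $6.88 figures come from the comparison above. This is not Akash's bidding API, just the selection rule in miniature.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Bid:
    provider: str            # hypothetical provider label
    usd_per_gpu_hour: float  # price the provider offers


def pick_lowest_bid(bids: Sequence[Bid], max_price: float) -> Optional[Bid]:
    """Reverse auction: the cheapest bid at or under the tenant's ceiling wins."""
    eligible = [b for b in bids if b.usd_per_gpu_hour <= max_price]
    return min(eligible, key=lambda b: b.usd_per_gpu_hour) if eligible else None


def saving_vs(reference_price: float, price: float) -> float:
    """Fractional saving relative to a reference price."""
    return 1.0 - price / reference_price


# Illustrative bids; only the 1.49 figure is taken from the article.
bids = [Bid("provider-a", 1.89), Bid("provider-b", 1.49), Bid("provider-c", 2.40)]
winner = pick_lowest_bid(bids, max_price=2.00)
saving = saving_vs(6.88, winner.usd_per_gpu_hour)  # AWS p5 on-demand as reference
print(f"{winner.provider} wins at ${winner.usd_per_gpu_hour:.2f}/hr, "
      f"{saving:.0%} below the reference price")
```

With a ceiling below every bid, `pick_lowest_bid` returns `None`, which is the small-marketplace failure mode in code form: no eligible provider, no deployment.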

Render: Real customers, centralised operation

Render has 15,670 node operators and genuine enterprise customers: Hollywood studios, Apple Vision Pro projects. But it’s permissioned. You need approval to run a node. The core is closed-source. Roughly 50% insider allocation at launch. (Render Analysis)

What works: Real rendering workloads. High liquidity. Customers who pay.

What doesn’t: Freedom Score of 32/100. Permissioned network. Centralised operation. Late to the AI compute pivot, as rendering is still the core business.

io.net: Metrics inflation

io.net markets 327,000 “registered” GPUs. The daily average of verified, active GPUs in Q1 2025 was 6,720. That is roughly 2% of the registered base. Freedom Score: 38/100. Closed-source core. No governance. (io.net Review)

Revenue is growing ($5.7M in Q1 2025, 82.6% quarter-over-quarter growth). But the credibility gap is real. After the 2024 Sybil attack that inflated GPU counts, every metric deserves scrutiny.

Gensyn: Not ready yet

Gensyn is the promising one. Testnet Phase 0. TGE (token generation event) planned for April 2026. 165,000+ testnet users. Projected pricing at $0.40 per V100-equivalent hour. But no mainnet. No revenue. Heavy insider allocation at 54.6%. (Gensyn Analysis)

Compute sovereignty spectrum

[Figure: Compute Sovereignty Spectrum]

DATA: Where your training data comes from

Data layer sovereignty means controlling both data sources and data destination. Two distinct problems: obtaining training data, and protecting inference data.

Grass: Bandwidth, not data sovereignty

Grass has 8.3 million monthly active nodes across 190 countries. 3 petabytes of data retrieved daily. Production-ready at massive scale. (Grass Review)

But Grass is not a data sovereignty solution. Users contribute bandwidth, not data ownership. The network scrapes the web and sells to enterprise customers (roughly 20 of them). Users don’t own the data. They don’t share in revenue. They bear legal risk as exit nodes. Closed-source. Zero public repositories. Zero governance.

Verdict: Grass is a bandwidth marketplace where users contribute to a data monopoly. Useful for obtaining training data if you’re the enterprise customer. Not sovereignty.

Vana: Actual data sovereignty

Vana is different. Users export personal data (Reddit history, Spotify listening, ChatGPT interactions) and contribute to DataDAOs that pool and validate it. TEE-based verification. Users control keys. Self-hosting option exists. Consent enforced on-chain. (Vana Analysis)

What works: True data sovereignty via user-controlled keys. TEE verification. Self-host option.

What doesn’t: Permissioned validator set. Individual data value is “fractions of a cent.” Requires collective action through DataDAOs. Token down 95% from all-time high.

Ocean: Best tech, governance crisis

Ocean Protocol pioneered Compute-to-Data: algorithms travel to data, not vice versa. Perfect for healthcare and finance where data cannot leave premises. Seven years of development. (Ocean Review)

But the Foundation has stated the token has “no intended utility value.” Governance was dismantled. Active litigation with Fetch.ai over a $120M dispute. 81% of supply converted to ASI. The tech is mature. The organisation is not.
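The Compute-to-Data pattern can be sketched in a few lines. This is a toy illustration and not Ocean's API: `DataCustodian`, `mean_age`, and the sample records are all hypothetical. The point is only the direction of travel. The job goes to the data, and an aggregate comes back.

```python
from statistics import mean
from typing import Callable, Dict, List


class DataCustodian:
    """Holds private records and runs only pre-approved jobs against them."""

    def __init__(self, records: List[dict], approved_jobs: Dict[str, Callable]):
        self._records = list(records)          # never returned to callers
        self._approved = dict(approved_jobs)   # allowlist of algorithms

    def run(self, job_name: str):
        if job_name not in self._approved:
            raise PermissionError(f"job {job_name!r} is not approved")
        # The algorithm executes where the data lives; only its result leaves.
        return self._approved[job_name](self._records)


def mean_age(records: List[dict]) -> float:
    """Example aggregate job: reveals one number, not the rows."""
    return mean(r["age"] for r in records)


custodian = DataCustodian(
    records=[{"age": 34}, {"age": 51}, {"age": 47}],  # made-up sample rows
    approved_jobs={"mean_age": mean_age},
)
result = custodian.run("mean_age")
print(result)
```

A real deployment would also need to vet what each approved job can leak, since an allowlist of careless aggregates can still expose individual rows.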

Data sovereignty spectrum

[Figure: Data Sovereignty Spectrum]

MODEL: Can you run and modify your own?

Model layer sovereignty means three things: access to model weights, ability to fine-tune, and ability to run inference privately.

Open model landscape (2026)

| Model | Parameters | VRAM (INT4) | Min Hardware |
|---|---|---|---|
| Llama 3.1 8B | 8B | 4-6 GB | RTX 3060/4060 |
| Llama 4 Scout | 109B | 55 GB | 1x H100 80GB |
| Qwen 3.5 72B | 72B | 36 GB | 1x H100 |
| DeepSeek V3.2 | 685B (37B active) | ~380 GB (Q4) | 8x H100 |

VRAM rules of thumb: FP16 needs roughly 2 GB per billion parameters. INT4 needs roughly 0.5 GB per billion parameters. Add 20-40% for KV cache in production.
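The rules of thumb translate directly into a small estimator. This is a rough sketch: the function names and the 30% default cache allowance (the midpoint of the 20-40% range) are my own choices, and real usage varies with context length and batch size.

```python
BYTES_PER_PARAM = {"fp16": 2.0, "int4": 0.5}


def weights_vram_gb(params_billions: float, precision: str) -> float:
    """Weights-only footprint: one billion parameters at one byte each is ~1 GB."""
    return params_billions * BYTES_PER_PARAM[precision]


def serving_vram_gb(params_billions: float, precision: str,
                    kv_overhead: float = 0.3) -> float:
    """Weights plus a KV-cache allowance (the text suggests 20-40%)."""
    return weights_vram_gb(params_billions, precision) * (1.0 + kv_overhead)


for name, size in [("Llama 3.1 8B", 8), ("Qwen 3.5 72B", 72), ("Llama 4 Scout", 109)]:
    print(f"{name}: INT4 weights {weights_vram_gb(size, 'int4'):.1f} GB, "
          f"with cache allowance {serving_vram_gb(size, 'int4'):.1f} GB")
```

For Llama 4 Scout this gives 54.5 GB of weights, which the table rounds to 55 GB, and about 71 GB with the cache allowance, which is why a single 80 GB H100 is listed as the minimum.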

The QLoRA revolution

Full fine-tuning of a 70B model in FP32 requires roughly 1,120 GB of VRAM (weights + gradients + Adam optimizer states at 16 bytes per parameter). Mixed-precision (BF16) brings this down to around 672 GB. Either way, it means a multi-GPU cluster and costs in the range of $240-360 per training run on cloud GPUs.

QLoRA changed this. 70B models trainable on a single GPU with ~46 GB VRAM. 10-30x cheaper than full fine-tuning. 99% fewer trainable parameters. Cost depends heavily on hardware: $10-24 per run is achievable on Akash A6000s or budget cloud GPUs, though higher-end hardware or longer training runs will cost more.
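The memory gap between the two approaches is mostly arithmetic. The sketch below reproduces the FP32 figure from its per-parameter breakdown (4 bytes each for weights, gradients, and the two Adam moment estimates) and shows how much of the ~46 GB QLoRA budget the frozen 4-bit base weights take. The function names are illustrative, and the split of the remaining headroom between adapters, optimizer state, and activations is not modelled.

```python
# Bytes per parameter for full fine-tuning in FP32 with Adam.
FP32_BYTES = {
    "weights": 4,
    "gradients": 4,
    "adam_first_moment": 4,
    "adam_second_moment": 4,
}


def full_finetune_vram_gb(params_billions: float) -> float:
    """Weights + gradients + optimizer states, all held in FP32."""
    return params_billions * sum(FP32_BYTES.values())


def quantised_base_gb(params_billions: float, bits: int = 4) -> float:
    """Footprint of the frozen base weights when stored at `bits` per parameter."""
    return params_billions * bits / 8


full = full_finetune_vram_gb(70)   # FP32 full fine-tune
base = quantised_base_gb(70)       # 4-bit frozen base for QLoRA
headroom = 46 - base               # what is left of the ~46 GB quoted above
print(f"full FP32: {full:.0f} GB, 4-bit base: {base:.0f} GB, headroom: {headroom:.0f} GB")
```

The mixed-precision figure depends on which states stay in 32-bit, so the sketch does not try to reproduce it.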

[Figure: Fine-Tuning Cost per Run (70B Model)]

Model sovereignty assessment

| Capability | Open Weights | API Only |
|---|---|---|
| Run offline | Yes | No |
| Fine-tune | Yes | Limited/None |
| Modify architecture | Yes | No |
| No usage tracking | Yes | No |
| No rate limits | Yes | No |
| Full control | Yes | No |

Open weights don’t guarantee sovereignty. You still need compute to run them. But they’re a prerequisite.

INFERENCE: Who sees your prompts?

Inference sovereignty means querying AI models without exposing inputs to third parties. This is the most critical privacy layer for sensitive applications.

Privacy technology comparison

| Technology | Overhead | Trust Model | Maturity |
|---|---|---|---|
| TEE | Near-native | Trust Intel/AMD | Production |
| FHE | 100-1,000x | Cryptographic | 2027-28 |
| MPC | Network-bound | Honest majority | Production |

TEE tradeoffs: Near-native speed. Sub-second latency possible. But you trust Intel/AMD/ARM hardware. Side-channel attacks are possible. No quantum resistance. For a deeper look at TEE, FHE and MPC tradeoffs, see private AI inference. (BlockEden Privacy Analysis)

FHE tradeoffs: Mathematical security. Quantum-safe. Computation on encrypted data. But 100-1,000x overhead. Mainstream throughput not expected until 2027-28.

Venice: Privacy-as-feature

Venice reports 1.3 million registered users processing 45 billion tokens daily. No server-side storage of prompts. Local browser storage for history. Strips identifying info before GPU forwarding. Uncensored models. (Venice Analysis)

But: GPU providers see plaintext prompts. Centralised, closed-source proxy. No independent privacy audit. Privacy is a business decision, not architectural. Freedom Score: 57/100.

Phala: TEE-based confidential computing

Phala Network uses Trusted Execution Environments (Intel TDX, NVIDIA H100/H200/B200). Remote attestation proves computation integrity. SOC 2 Type I + HIPAA compliance. The only DeAI TEE cloud with both certifications. (Phala Review)

What works: Hardware-enforced privacy. Remote attestation. Compliance certifications. ElizaOS V2 integration.

What doesn’t: Only 398 paid users. L2 is Stage 0 with a centralised sequencer. 87.4% code contribution from a single developer. GPU registration requires emailing the team.

Inference sovereignty spectrum

[Figure: Inference Sovereignty Spectrum]

COORDINATION: How do distributed participants align?

Without coordination, you have isolated compute nodes and siloed models. These protocols are the economic glue: they route work, score outputs, and distribute rewards across participants who have no reason to trust each other.

Bittensor: The largest DeAI network

Bittensor has 128+ active subnets and $100M+ exchange-reported daily volume (unfiltered; may include wash trading). Chutes, a subnet handling serverless inference, processes billions of tokens daily. Real workloads, not hypothetical. (Bittensor Analysis)

The incentive model: Miners compete to produce AI outputs. Validators score them. The best miners earn the most TAO. Darwinian selection applied to model inference and training.

The stake-weight problem: Stake weight appears to correlate more strongly with rewards than output quality does. If so, wealth determines TAO earnings more than actual AI quality. This is a fundamental misalignment.
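A toy model shows how this can happen. It is deliberately simplified and is not Bittensor's actual consensus algorithm: the validator names, stakes, and scores are invented, and rewards are simply the stake-weighted average of validator scores, normalised across miners.

```python
from typing import Dict


def reward_shares(stakes: Dict[str, float],
                  scores: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Split rewards across miners by stake-weighted validator scores.

    stakes: validator -> stake
    scores: validator -> {miner -> score in [0, 1]}
    """
    total_stake = sum(stakes.values())
    miners = {m for per_validator in scores.values() for m in per_validator}
    weighted = {
        m: sum(stakes[v] * scores[v].get(m, 0.0) for v in stakes) / total_stake
        for m in miners
    }
    total = sum(weighted.values())
    if total == 0:
        raise ValueError("no miner received a positive score")
    return {m: w / total for m, w in weighted.items()}


# One large staker prefers miner-a; two small stakers prefer miner-b.
stakes = {"whale": 900.0, "small-1": 50.0, "small-2": 50.0}
scores = {
    "whale": {"miner-a": 1.0, "miner-b": 0.0},
    "small-1": {"miner-a": 0.0, "miner-b": 1.0},
    "small-2": {"miner-a": 0.0, "miner-b": 1.0},
}
shares = reward_shares(stakes, scores)
print(shares)
```

In this example two of three validators rate miner-b higher, yet miner-a takes most of the reward because the single largest staker's opinion dominates. Whether the live network behaves this way is an empirical question; the sketch only illustrates the mechanism.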

Sovereignty tradeoffs: Local execution. Self-custodial wallets. No platform surveillance. But PoA block production is centralised. Triumvirate governance (3 OTF employees). Gini coefficient ~0.98 (extreme early mining concentration). Zero external revenue, entirely emission-dependent.

Morpheus: The fair launch

Morpheus is the only genuine fair launch in DeAI. Every MOR earned through contribution. No premine. No VC allocation. (Morpheus Review) For the mechanics of how MOR emission and staking work, see how MOR actually works. If you want to run a node, see the Morpheus Lumerin node setup guide.

Morpheus Contribution Model

| Contributor Type | What They Provide | How They Earn MOR |
|---|---|---|
| Compute | GPU inference | Proving workload |
| Code | Smart contracts, agents | GitHub contribution scoring |
| Capital | stETH deposits | Funding protocol development |
| Community | Onboarding, docs | Through protection fund |

What works: Fair launch changes everything. The protocol cannot be rug-pulled by early insiders. Local agent execution. No platform surveillance. 16-year emission decay mimics Bitcoin.

What doesn’t: Thin liquidity (roughly $30K daily volume means 5-10% slippage). Minimal external revenue. Small provider count. Agent capabilities are early-stage. 90-day lock on earned MOR prevents a quick exit.

Coordination sovereignty spectrum

[Figure: Coordination Sovereignty Spectrum]

What does sovereignty actually cost?

The break-even between API and self-hosted depends on which API you’re comparing against and how much you use. API pricing has dropped significantly since 2024. The table below uses Claude Sonnet 4.6 ($3/$15 per MTok, blended at roughly $9/MTok) as the mid-tier reference, and a single Akash A100 80GB (roughly $577/month) running a 70B quantised model at around 40 tok/s (3.5M tokens/day capacity) for self-hosted.

API vs Self-Hosted Cost (March 2026)

| Daily Tokens | Sonnet 4.6 API | Self-Hosted (Akash A100) | Winner |
|---|---|---|---|
| 500K | ~$4.50/day | 1 GPU: ~$19/day ($577/mo) | API |
| 2M | ~$18/day | 1 GPU: ~$19/day ($577/mo) | Roughly even |
| 10M | ~$90/day | 3 GPUs: ~$57/day ($1,731/mo) | Self-hosted |
| 50M | ~$450/day | 15 GPUs: ~$289/day ($8,655/mo) | Self-hosted |

Against cheaper APIs (DeepSeek V3.2 at $0.35/MTok blended, or Venice’s Qwen 3 235B at $0.45/MTok), the break-even shifts much higher. Against premium APIs (Claude Opus 4.6 at $15/MTok blended), it shifts lower. The model and provider you’re replacing determines the economics.
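The table can be reproduced from the stated inputs. This sketch assumes a 30-day month (which is what turns $577/month into roughly $19/day), whole GPUs only, and the single-stream 40 tok/s figure. The function names are mine.

```python
import math

GPU_USD_PER_MONTH = 577.0   # Akash A100 80GB, from the text
TOKENS_PER_SECOND = 40      # 70B quantised model, from the text
DAYS_PER_MONTH = 30         # assumption used to get ~$19/day

GPU_USD_PER_DAY = GPU_USD_PER_MONTH / DAYS_PER_MONTH
GPU_TOKENS_PER_DAY = TOKENS_PER_SECOND * 86_400  # ~3.5M tokens


def api_cost_per_day(daily_tokens: float, usd_per_mtok: float) -> float:
    """API spend for a given daily token volume."""
    return daily_tokens / 1e6 * usd_per_mtok


def self_hosted_per_day(daily_tokens: float) -> tuple[int, float]:
    """GPUs needed (whole units) and their combined daily cost."""
    gpus = max(1, math.ceil(daily_tokens / GPU_TOKENS_PER_DAY))
    return gpus, gpus * GPU_USD_PER_DAY


def first_gpu_break_even(usd_per_mtok: float) -> float:
    """Daily tokens at which API spend equals the cost of one GPU."""
    return GPU_USD_PER_DAY / usd_per_mtok * 1e6


def full_utilisation_usd_per_mtok() -> float:
    """Self-hosted cost per million tokens if the GPU is busy all day."""
    return GPU_USD_PER_DAY / (GPU_TOKENS_PER_DAY / 1e6)


for tokens in (500_000, 2_000_000, 10_000_000, 50_000_000):
    gpus, hosted = self_hosted_per_day(tokens)
    print(f"{tokens:>11,} tok/day  API ${api_cost_per_day(tokens, 9.0):7.2f}  "
          f"self-hosted ${hosted:7.2f} ({gpus} GPU)")
```

On these numbers the first GPU pays for itself at roughly 2.1M tokens per day against a $9/MTok API. The same assumptions put a fully utilised GPU at about $5.57 per million tokens, which suggests that APIs priced below that level stay cheaper at any volume unless batching or faster hardware lifts throughput above 40 tok/s.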

Three sovereignty stacks

Entry-level (Individual, 7-8B model): Consumer GPU you own. Llama 3.1 8B INT4. Local inference with Ollama. No privacy tech. Cost: $0-200/month (electricity and hardware you already own). Sovereignty: HIGH. Limitations: Model size, no coordination.

[Figure: Entry-Level Sovereignty Stack]

Professional (Small team, 70B model): Akash H100 spot (roughly $1,088/month). Qwen 3.5 72B INT4. Phala Cloud for TEE inference. Vana DataDAO participation. Bittensor subnet coordination. Cost: $2,000-4,000/month combined (compute + TEE + data layer fees). Sovereignty: MEDIUM-HIGH.

[Figure: Professional Sovereignty Stack]

Enterprise (400B+ model): Multi-provider Akash cluster (10+ H100s at roughly $1.49/hr each). Fine-tuned 70B+ model. Ocean C2D or Sahara for data. Phala Enterprise for inference. Custom subnet. Cost: $40,000-100,000/month depending on GPU count, redundancy, and support requirements. Sovereignty: MEDIUM.

The hybrid approach

You don’t need full sovereignty to see benefits. Hybrid approaches work. Route non-sensitive queries to cheaper APIs (DeepSeek V3.2 at $0.35/MTok is hard to beat on cost). Keep sensitive workloads on infrastructure you control. The sovereignty premium is real, but you only pay it for the workloads that need it.
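A hybrid setup comes down to a routing decision per request. The sketch below is one naive way to make it, assuming a caller-supplied sensitivity tag plus a small keyword and pattern check. The patterns, the backend labels, and the function names are all hypothetical, and a production router would need a far more careful classifier.

```python
import re

# Hypothetical patterns; tune these to your own threat model.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like number
    re.compile(r"\b(?:salary|diagnosis|password|iban)\b", re.IGNORECASE),
]


def is_sensitive(prompt: str, tagged_sensitive: bool = False) -> bool:
    """True if the caller flagged the request or any pattern matches."""
    return tagged_sensitive or any(p.search(prompt) for p in SENSITIVE_PATTERNS)


def choose_backend(prompt: str, tagged_sensitive: bool = False) -> str:
    """Send sensitive prompts to infrastructure you control, the rest to an API."""
    return "self_hosted" if is_sensitive(prompt, tagged_sensitive) else "external_api"


print(choose_backend("Summarise this public blog post"))
print(choose_backend("Patient diagnosis: type 2 diabetes"))
print(choose_backend("Draft a reply", tagged_sensitive=True))
```

Failing closed is the safer default: if the check is unsure, route to the self-hosted backend and accept the higher cost for that request.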

What’s ready today?

Production-ready now (2026)

| Layer | Protocol | Confidence |
|---|---|---|
| Compute | Akash | High (2020 mainnet, $3.15M revenue) |
| Compute | Render | High (enterprise customers) |
| Inference | Venice | High (1.3M users, 45B tokens/day) |
| Inference | Phala | Medium-High (TEE, SOC2/HIPAA) |
| Data | Grass | High (scale proven, sovereignty concerns) |
| Coordination | Bittensor | High (largest DeAI, real workloads) |
| Model | Llama/Qwen/DeepSeek | High (open weights, active dev) |

Emerging (6-12 months)

| Layer | Protocol | Timeline |
|---|---|---|
| Compute | Gensyn | TGE April 2026, mainnet TBD |
| Data | Vana | Mainnet live, decentralisation roadmap pending |
| Data | Sahara | Sahara Chain in development |
| Coordination | Morpheus | Functional but thin liquidity |

Experimental

| Layer | Protocol | Status |
|---|---|---|
| Inference | FHE-based | 100-1,000x overhead, mainstream 2027-28 |
| Inference | MPC-based | Network-bound, honest majority required |
| Data | Ocean | Governance crisis, litigation |

Seven takeaways

1. Sovereignty is a spectrum, not binary. No protocol achieves perfect sovereignty. Every choice involves tradeoffs. Akash is cheap and decentralised but has a small provider base. Vana offers user-controlled data but requires collective action. Phala provides TEE privacy but trusts Intel/AMD hardware. Morpheus has a fair launch but thin liquidity.

2. Compute is the most production-ready layer. Akash (2020 mainnet, $3.15M annual revenue), Render (enterprise customers), io.net (growing revenue, $5.7M in Q1 2025): all have real workloads. Least experimental layer by some distance.

3. Data sovereignty is the hardest problem. Grass users contribute bandwidth, not data ownership. Vana offers genuine sovereignty but requires collective action to work. Ocean has the best tech (Compute-to-Data) but its governance has collapsed. Most builders will end up using synthetic data or open datasets and calling it a day.

4. Inference privacy requires tradeoffs. TEE (Phala) is fastest but trusts hardware vendors. FHE is mathematically secure but carries 100-1,000x overhead. Local inference gives you perfect privacy and limits you to whatever fits on your GPU.

5. Against mid-tier APIs (Sonnet-class at roughly $9/MTok), about 2 million tokens per day is where self-hosting breaks even. Against budget APIs like DeepSeek ($0.35/MTok), the threshold is much higher. The model you’re replacing determines the economics.

6. Fair launch matters more than most people think. Morpheus is the only protocol with zero insider allocation. The protocol cannot be rug-pulled by early insiders, because there are none. That changes the calculus significantly.

7. QLoRA changed the economics of fine-tuning. 70B models are trainable on a single GPU with ~46 GB VRAM. $10-24 per run on budget hardware versus $240-360 for full fine-tuning. If you’ve been putting off fine-tuning because of cost, you’re working from outdated assumptions.

The honest assessment

AI sovereignty is achievable today. You can run your own models, on your own compute, with your own data, using privacy-preserving inference. The infrastructure exists.

But it requires informed tradeoffs. Sovereignty costs time, money, and expertise. Protocol risk is real: governance failures, technical vulnerabilities, market dynamics that shift incentives. The most sovereign stack is not always the most practical.

Start with the question: what are you actually trying to protect? Sensitive financial data needs the full stack. Casual experimentation needs none of it. Match your stack to your threat model.

The technology is not the constraint. Economics and operational complexity are. Sovereignty is now a choice, not a limitation.

The dual-score framework (Freedom Score + Returns Score) is my attempt to bring analytical rigour to a space full of marketing claims. See Freedom Score Methodology and Returns Score Methodology. Current token holdings are disclosed on our disclaimer page.