Intelligent Internet

A planned sovereign AI protocol with a three-layer blockchain (Bitcoin Core fork), dual-currency economy, and Proof-of-Benefit consensus. Founded by ex-Stability AI CEO Emad Mostaque. Pre-launch.

Strong open-source AI output (9 models, 20 datasets on HuggingFace) and real agent tooling, but no chain, no token, and execution depends on a controversial founder. Watch closely.

- + Genuine open-source AI output: 9 models, 20 datasets and II-Agent framework under Apache 2.0

- + II-Medical-8B runs locally via llama.cpp or Ollama; self-hostable with zero server dependency

- + Whitepaper Proof-of-Benefit design is the most intellectually ambitious document in the DeAI space

- − No blockchain, no token, no validators, no testnet timeline. The protocol layer is a PDF

- − Founder Emad Mostaque carries Stability AI baggage: fraud lawsuit, investor revolt, $153M projected losses in 2023

- − No disclosed funding; L42 Ltd has one director and ~18 people for an L0 blockchain project

Intelligent Internet scores 42/100 (D grade), reflecting a project with genuinely strong open-source contributions but no decentralised infrastructure or governance. The open-source transparency score (13/15) is among the highest in our portfolio: downloadable models, open datasets, published training methodologies, and Apache 2.0 licensing throughout. The censorship resistance and data sovereignty scores (8/15 and 7/15) reflect the genuine sovereignty value of self-hostable AI models that run locally without server dependencies.

Once you download II-Medical-8B, no one can take it away. However, governance (2/20) and infrastructure decentralisation (5/20) remain very low because no blockchain, no validators, no governance mechanisms, and no distributed network exist. The freedom score will need significant reassessment if and when the network launches.

As a pre-token project, II is being scored on what it delivers today: open-source AI tools and models that enable individual sovereignty, wrapped in a vision for decentralised infrastructure that has not yet been built.

Overall returns potential is weak at 23/100. Strongest dimension: supply dynamics (10/20). Weakest: revenue sustainability (0/25).

Not financial advice. Scores are opinions, not recommendations. Crypto is high-risk – you could lose everything you invest. Full disclaimer.

What it does

Intelligent Internet is an open-source AI project producing downloadable models, agent frameworks and training datasets, with a planned three-layer blockchain that hasn’t been built yet. The distinction matters. What exists today is actually useful. What’s promised requires a leap of faith.

Shipped deliverables include: II-Agent, an open-source coding agent framework (3,172 GitHub stars, Apache 2.0); II-Medical, a family of clinical reasoning models fine-tuned on Qwen3 (8B and 32B parameter variants, downloadable from HuggingFace in GGUF format for local running); II-Search, a search-optimised 4B model; CommonGround, a multi-agent orchestration protocol; and II-Commons, a self-hostable knowledge base. Twenty datasets are published on HuggingFace, including II-Medical-Reasoning-SFT (2.2 million samples) and II-Thought-RL-v0 (342,000 reasoning examples). Nine models in total. All Apache 2.0 licensed.

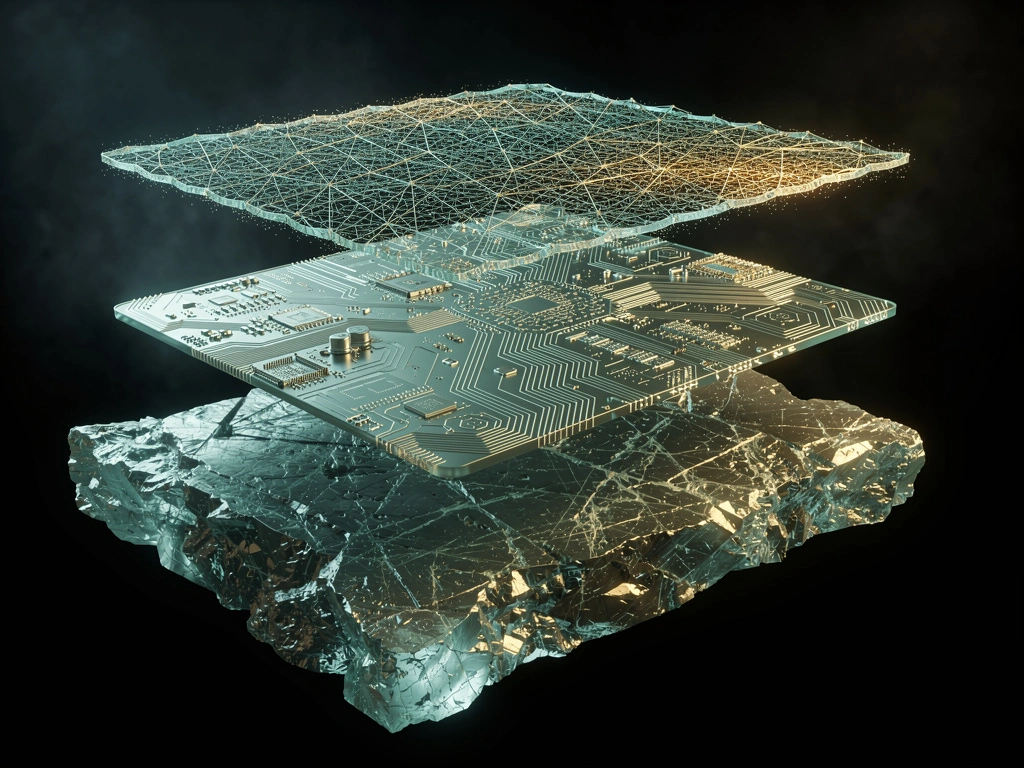

On the planned blockchain side: a three-layer architecture. Foundation Layer (L0), a fork of Bitcoin Core v25 with BFT consensus replacing Proof-of-Work. Culture Layer (L1), sovereign roll-ups operated by “National Champions” settling in local Culture Credits. Personal Layer (L2), running on user devices where AI agents execute workflows locally. Dual-currency economy with Foundation Coins (FC, 21 million hard cap mirroring Bitcoin) and Culture Credits (CC, elastic national currencies reserve-backed by FC). Proof-of-Benefit consensus ties every minted coin to verified useful AI work. None of this exists beyond a whitepaper published in July 2025.

Founded by Emad Mostaque through L42 Ltd, a private limited company incorporated in England and Wales in April 2024 (Companies House number 15644961). Mostaque is the sole director. He previously co-founded Stability AI, which released Stable Diffusion (downloaded 100 million-plus times), raised $174 million achieving a $1 billion-plus valuation, and then imploded. He resigned as CEO in March 2024 under investor pressure.

Estimated headcount is 18 people (PitchBook), with core ML development handled by a Vietnam-based team of 6-8 engineers. No funding has been publicly disclosed. The project may be self-funded from Mostaque’s personal wealth.

Value proposition

Real AI output

9 models and 20 datasets on HuggingFace. II-Agent framework with 3,172 GitHub stars, all Apache 2.0.

Whitepaper blockchain

Three-layer L0/L1/L2 architecture with Proof-of-Benefit consensus and dual FC/CC currency.

Founder risk

Emad Mostaque's Stability AI history: fraud lawsuit, investor revolt, $153M projected losses in 2023.

The value proposition splits cleanly into what exists today and what’s promised.

What exists is substantive for a pre-token project. According to Intelligent Internet’s published model cards, II-Medical-8B achieves 70.49% average across 10 medical benchmarks, performance comparable to models four to nine times its size. The updated version (II-Medical-8B-1706) scores 46.8% on HealthBench, comparable to Google’s MedGemma-27B. These models run locally on consumer hardware via llama.cpp, Ollama or LM Studio. Once downloaded, there is no server dependency and no API key, and no one can take them away. For the sovereignty thesis, this is the purest form of “own your mind”: a clinical reasoning model running on your own machine.

According to Intelligent Internet’s benchmarks, II-Agent scores 61.8% on Terminal Bench 2, above Claude Code at 58.0%. II-Search-4B hits 91.8% on SimpleQA (versus the base Qwen3-4B at 76.8%). These are self-reported results; the models are publicly downloadable and testable. The agent runs. You can verify this yourself.

Training datasets are a concrete public good. II-Thought-RL-v0 is the first large-scale multi-task RL dataset. II-Medical-Reasoning-SFT’s 2.2 million samples enable anyone to train their own medical reasoning models. This contribution to the open-source AI commons is real and lasting.

What’s promised is the most ambitious vision in the DeAI space. A Bitcoin-inspired blockchain where every minted coin proves useful AI work was performed. Sovereign roll-ups operated by national-level validators. A Living Constitution Assembly with sortition-selected jurors. An Oracle Council of 15 AI agents governing protocol parameters. The whitepaper is technically detailed and intellectually coherent. It’s also entirely theoretical.

A counter-narrative exists. Mostaque has form for vision that outruns execution. Stability AI had a spectacular vision too. The pattern of ambitious promises, rapid early progress, and eventual governance and financial challenges is well documented. Whether that pattern repeats is the central question.

Tokenomics

No token exists. No chain exists. Everything below describes whitepaper design, not operational reality.

Foundation Coins (FC) mirror Bitcoin’s supply model: 21 million hard cap, initial block subsidy of 50 FC, halving every 210,000 valid blocks (two epochs). Faster emission than Bitcoin, with roughly 99% circulated within 12 years. The critical difference: blocks are only valid when they contain a Proof-of-Benefit receipt proving useful AI work was performed. Four genesis benefit classes: compute-inference, compute-training, data-curation and agent-orchestration.
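The supply figures above can be checked with a little arithmetic. The sketch below uses only the constants quoted from the whitepaper (50 FC initial subsidy, halving every 210,000 valid blocks); the function names are illustrative and nothing here reflects actual protocol code, since none exists.

```python
# Sketch of the Foundation Coin emission schedule described above.
# Constants are taken from the text; function names are illustrative only.

INITIAL_SUBSIDY_FC = 50        # initial block subsidy
BLOCKS_PER_HALVING = 210_000   # valid blocks between halvings


def minted_after(halving_periods: int) -> float:
    """Total FC minted once `halving_periods` full periods have elapsed."""
    return sum(
        BLOCKS_PER_HALVING * INITIAL_SUBSIDY_FC / (2 ** period)
        for period in range(halving_periods)
    )


def periods_to_reach(fraction: float, cap: float = 21_000_000) -> int:
    """Smallest number of full halving periods that mints `fraction` of the cap."""
    periods = 0
    while minted_after(periods) < fraction * cap:
        periods += 1
    return periods


first_period = minted_after(1)            # half of the 21 million cap
periods_for_99 = periods_to_reach(0.99)   # first period count at or above 99%
```

The first period mints 10.5 million FC, and the series converges on the 21 million cap. Crossing 99% takes seven halving periods, so the "within 12 years" figure would imply periods of well under two years each, against roughly four for Bitcoin. That per-period duration is an inference from the quoted numbers, not something stated in the text.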

No pre-mine is described in the whitepaper. Coins are minted only through validated work. If implemented as described, this would be one of the fairest distribution methods in crypto. The design is structurally closer to Bitcoin mining than to the ICO, VC-backed or insider-heavy models that dominate DeAI. A Treasury receives a programmable share of block rewards (percentage TBD), which is the potential centralisation vector.

Culture Credits (CC) are the second currency: elastic, reserve-backed by FC, and issued by National Champions for local economic activity. Each sovereign roll-up operates its own CC with an on-chain AMM for CC-to-FC swaps. Reserve requirements ensure backing. This dual-currency model is novel and completely untested.
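To make the CC-to-FC swap idea concrete, here is a minimal sketch of how an AMM pool prices a trade. It assumes a constant-product formula (reserves multiplied together stay constant) with no fees, which is a common AMM design but is an assumption here: the pricing formula for the planned pools is not given above, and the function name and pool sizes are hypothetical.

```python
# Illustrative constant-product AMM swap (x * y = k), no fees.
# ASSUMPTION: the planned CC/FC pools may use a different formula entirely.

def swap_cc_for_fc(cc_reserve: float, fc_reserve: float, cc_in: float):
    """Return (fc_out, new_cc_reserve, new_fc_reserve) for a CC -> FC swap."""
    if cc_in <= 0:
        raise ValueError("cc_in must be positive")
    k = cc_reserve * fc_reserve          # invariant before the trade
    new_cc_reserve = cc_reserve + cc_in  # pool receives the Culture Credits
    new_fc_reserve = k / new_cc_reserve  # FC reserve that keeps k unchanged
    fc_out = fc_reserve - new_fc_reserve # FC paid out to the trader
    return fc_out, new_cc_reserve, new_fc_reserve


# Hypothetical pool: 1,000 CC against 10 FC; a trader sells 100 CC.
fc_out, cc_after, fc_after = swap_cc_for_fc(1_000.0, 10.0, 100.0)
```

In this made-up pool the trader receives about 0.909 FC rather than the 1.0 FC the starting price suggests. That slippage is why pool depth and reserve requirements matter for an elastic currency backed by a scarce one.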

Key parameters remain undefined: exact stake amounts for National Champions, slashing percentages, Treasury burn-vs-grant splits, CC reserve requirements, evaluation thresholds for Proof-of-Benefit. The whitepaper honestly labels these as TBD rather than fabricating numbers, which is at least transparent.

Warning: an unofficial Solana token using the ticker “II” exists on CoinSwitch with zero liquidity. This is not endorsed by the project. No official token has been listed on CoinGecko or CoinMarketCap. Do not buy it.

How to participate

Use II-Agent. The most accessible entry point. Available as a web app (agent.ii.inc) or self-hosted via Docker and CLI from GitHub. Supports multiple LLM backends (Gemini 3, Claude Sonnet 4.5, GPT-5). Free. Apache 2.0 licensed. Self-hosting requires API keys for your chosen LLM provider.

Download and run models locally. II-Medical-8B in GGUF format runs on any machine with 8GB-plus RAM via llama.cpp or Ollama. II-Search-4B is even lighter. MLX format available for Apple Silicon. This is the highest-sovereignty participation mode: local inference with zero server dependency. Not intended for clinical use.
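As a rough illustration of local inference, the sketch below queries a model through Ollama's local HTTP API using only the Python standard library. It assumes Ollama is running on its default port and that the GGUF file has already been imported under a local tag; the tag `ii-medical-8b` is a placeholder, not an official model name.

```python
import json
import urllib.request

# ASSUMPTIONS: Ollama is running locally on its default port, and the model
# was imported under this placeholder tag (not an official name).
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL_TAG = "ii-medical-8b"


def build_generate_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )


def ask_local_model(prompt: str) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt)) as resp:
        return json.loads(resp.read())["response"]


# Example (requires a running Ollama server):
# print(ask_local_model("List common causes of iron-deficiency anaemia."))
```

The request only ever goes to `localhost`, which is the sovereignty point in practice: the prompt and the answer stay on the machine.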

Use the datasets. Train or fine-tune your own models using the 20 published datasets. II-Thought-RL-v0 (342,000 reasoning examples) and II-Medical-Reasoning-SFT (2.2 million samples) are particularly valuable for anyone building AI training pipelines. Requires GPU infrastructure and ML expertise.

Contribute to open-source repos. Fourteen original repositories under Apache 2.0. Primary projects: II-Agent (coding agents), II-Researcher (search and research), CommonGround (multi-agent orchestration), II-Commons (knowledge base), II-Thought (RL dataset tooling). Python and TypeScript skills required.

Validate (planned, not yet available). The whitepaper describes National Champions: validators requiring economic FC stake (amount TBD), hardware attestation, symmetric 10 Gbit/s bandwidth on three independent physical paths, sub-300ms interlink latency and signed heartbeats every second. Twelve Champions at genesis, long-term target of one or more per sovereign nation. No selection process has been described and no timeline published.
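The validator requirements listed above can be expressed as a simple checklist. This is a sketch only: the class and field names are hypothetical, the reading that each of the three paths must carry 10 Gbit/s is an assumption, and the FC stake is left out because its amount is still TBD.

```python
from dataclasses import dataclass
from typing import List

# Thresholds quoted in the text. The stake requirement is omitted (amount TBD).
MIN_PATHS = 3
MIN_BANDWIDTH_GBIT = 10.0
MAX_LATENCY_MS = 300.0
MAX_HEARTBEAT_INTERVAL_S = 1.0


@dataclass
class ChampionCandidate:
    """Hypothetical description of a would-be National Champion node."""
    path_bandwidths_gbit: List[float]  # symmetric bandwidth per independent path
    interlink_latency_ms: float
    heartbeat_interval_s: float
    hardware_attested: bool


def unmet_requirements(c: ChampionCandidate) -> List[str]:
    """Return the quoted requirements this candidate fails (empty if none)."""
    failures = []
    # ASSUMPTION: every one of the three paths must sustain 10 Gbit/s.
    qualifying = [b for b in c.path_bandwidths_gbit if b >= MIN_BANDWIDTH_GBIT]
    if len(qualifying) < MIN_PATHS:
        failures.append("bandwidth")
    if c.interlink_latency_ms >= MAX_LATENCY_MS:
        failures.append("latency")
    if c.heartbeat_interval_s > MAX_HEARTBEAT_INTERVAL_S:
        failures.append("heartbeat")
    if not c.hardware_attested:
        failures.append("attestation")
    return failures


strong = ChampionCandidate([10.0, 10.0, 40.0], 120.0, 1.0, True)
weak = ChampionCandidate([10.0, 1.0], 350.0, 5.0, False)
```

Even in this simplified form the bar is high: three independent 10 Gbit/s paths is data-centre territory, which helps explain why no participants have been identified.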

Honest assessment

What works

The open-source output is real and competitive. Nine models. Twenty datasets. A functional agent framework that, by Intelligent Internet’s own reporting, benchmarks above Claude Code on Terminal Bench 2. II-Medical-8B punching above its weight at 8B parameters is technically impressive. All downloadable, all self-hostable, all Apache 2.0. The training methodologies (three-stage medical RL pipeline, four-phase search training with Code-Integrated Reasoning, DAPO safety RL) are documented and reproducible. Third-party GGUF quantisations exist (DevQuasar), confirming community adoption. The whitepaper, whatever you think of its feasibility, is the most technically detailed and intellectually ambitious design document in the DeAI space. The Proof-of-Benefit mechanism tying currency minting to verified useful AI work is a credible conceptual innovation.

What doesn’t work

There’s no chain. No token. No validators. No governance. No testnet. No mainnet timeline. The project is a small company (L42 Ltd, sole director: Mostaque) with an impressive whitepaper and demonstrably useful AI tools. It isn’t a protocol. The gap between the vision (sovereign AI infrastructure for every nation) and the current reality (roughly 18 people, no blockchain code, no disclosed funding) is vast.

Eight of the 22 GitHub repositories are forks of other projects (OpenAI Codex, Google Gemini CLI, litellm, verl and others), which inflates apparent scope. All models are fine-tunes of Alibaba’s Qwen3 family, not foundation models trained from scratch. The ML contribution is in training methodology, not base model capability. If Qwen3’s licence or availability changes, the model pipeline is affected.

“National Champions” as a concept (one validator per sovereign nation, 12 at genesis with extreme hardware requirements) has no identified participants, no selection process and no operational infrastructure. The Living Constitution Assembly, Guardian Lattice, Oracle Council and progressive decentralisation roadmap exist only as whitepaper text.

The risk

Emad Mostaque is an extreme concentration of both opportunity and risk. The opportunity: he proved with Stable Diffusion that open-source AI can compete with proprietary alternatives. His 290,000 X followers, AI industry profile and policy engagement (FII9 alongside Peter Diamandis) provide distribution that most DeAI projects cannot match.

His Stability AI track record includes a co-founder fraud lawsuit (Cyrus Hodes, July 2023), a Forbes investigation finding misleading claims about education credentials and institutional partnerships (UNESCO, OECD, WHO and World Bank all denied partnership claims), investor revolt from Lightspeed Venture Partners, and $153 million projected losses against $11 million income in 2023. The question is not whether these things happened. They did. The question is whether the pattern repeats.

No funding has been disclosed for Intelligent Internet. Building a novel L0 blockchain with BFT consensus, 12-plus validators, dual-currency AMMs and a Guardian Lattice requires significant capital. The custom Bitcoin Core fork approach means building everything from scratch: wallets, explorers, bridges, DeFi integrations, developer tooling. Most DeAI projects build on EVM, Cosmos or Solana where ecosystems already exist. This choice is either principled or impractical. Possibly both.

A further complication: the FII Institute connection (Future Investment Initiative, run by Saudi Arabia’s Public Investment Fund) adds geopolitical complexity. Sovereign AI infrastructure affiliated with a sovereign wealth fund raises questions about the “sovereignty” framing that haven’t been addressed.

My position

I don’t hold any Intelligent Internet tokens because no token exists. I’ve downloaded and tested II-Medical-8B locally. The models work. The agent framework works. Whether the blockchain vision materialises is a separate question from whether the open-source AI output is useful, and on the latter, the answer is yes.

Freedom Score: 42/100

Intelligent Internet scores 42/100 (D grade). Full methodology at Freedom Score Methodology.

Infrastructure decentralisation (5/20): No decentralised infrastructure exists. No blockchain, no validators, no consensus mechanism. However, the self-hostable models (II-Medical-8B GGUF, II-Search-4B, II-Thought-1.5B) and self-hostable tools (II-Agent via Docker, II-Commons with PostgreSQL) provide functional infrastructure decentralisation at the model layer. Once downloaded, these run entirely on user hardware with zero dependency on Intelligent Internet’s servers. The planned blockchain (Bitcoin Core fork, 12 National Champions, BFT consensus) is ambitious but entirely theoretical.

Governance decentralisation (2/20): All decisions made by Mostaque as sole director of L42 Ltd. No governance mechanism exists. No token voting, no DAO, no community decision-making. The whitepaper describes progressive decentralisation (multi-sig to Oracle Council to Living Constitution Assembly) but none of it is implemented. Apache 2.0 licensing provides a form of soft governance: anyone can fork any repo without permission. Meaningful, but it’s not governance.

Token distribution fairness (7/15): No token launched. The whitepaper design describes Proof-of-Benefit mining with no pre-mine, mirroring Bitcoin. If implemented as described, coins are minted only through verified useful AI work. Structurally fairer than most crypto launches. However, the Treasury receives a programmable share of block rewards (percentage TBD), initial multi-sig control by “founding Champions” means insider control before decentralisation, and key distribution parameters are undefined. Scored generously for design intent with a significant discount for nothing being implemented.

Censorship resistance (8/15): The downloadable models provide meaningful censorship resistance today, independent of any blockchain. II-Medical-8B, II-Search-4B and II-Thought-1.5B are complete model weights, not API access. Once downloaded, no entity can revoke, restrict or censor them. Training datasets are published, enabling full reproducibility. II-Agent supports multiple LLM backends and can be self-hosted. However, the web app (agent.ii.inc) is a centralised service. No censorship-resistant network layer exists yet.

Data sovereignty (7/15): Self-hosted models run entirely on user hardware with no data leaving the device. II-Agent can be self-hosted via Docker. II-Commons deployable with local PostgreSQL. Training datasets openly published. The planned L2 Personal Layer describes privacy-preserving local enclaves. However, the hosted web app processes data through centralised servers. No zero-knowledge proofs implemented. No confidential computing.

Open source and transparency (13/15): The project’s strongest dimension. Fourteen original repositories under Apache 2.0. Nine models with full weights on HuggingFace. Twenty datasets published openly. Training methodologies documented and reproducible. GGUF and MLX formats for local running. Companies House registration public. Deductions: 8 of 22 repos are forks (inflating apparent activity), Mostaque’s documented history of misleading claims at Stability AI is a transparency concern, corporate financials not disclosed.
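For readers who want to check the arithmetic, the six dimension scores above can be tallied in a few lines. The numbers are copied from this section; the dictionary layout is just one convenient way to hold them.

```python
# Freedom Score dimensions as (awarded, maximum), copied from the section above.
FREEDOM_SCORE = {
    "Infrastructure decentralisation": (5, 20),
    "Governance decentralisation": (2, 20),
    "Token distribution fairness": (7, 15),
    "Censorship resistance": (8, 15),
    "Data sovereignty": (7, 15),
    "Open source and transparency": (13, 15),
}

freedom_total = sum(awarded for awarded, _ in FREEDOM_SCORE.values())
freedom_max = sum(maximum for _, maximum in FREEDOM_SCORE.values())

# Points earned by the two dimensions that depend on a live network.
network_points = sum(
    FREEDOM_SCORE[name][0]
    for name in ("Infrastructure decentralisation", "Governance decentralisation")
)
```

The dimensions sum to 42 out of 100, matching the headline figure. The two network-dependent dimensions contribute only 7 of a possible 40 points, which is where a launched chain would move the score most.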

Path to improvement

Three changes would materially increase Intelligent Internet’s score:

- Launch a testnet. The single biggest signal that the blockchain vision is real rather than aspirational. A working testnet with Proof-of-Benefit consensus, even with a handful of validators, would demonstrate that the whitepaper design translates to operational code. Until then, the protocol layer is a PDF.

- Publish a funded roadmap with milestones. No mainnet timeline exists. No disclosed funding exists. A transparent roadmap with funded milestones and verifiable deliverable dates would address the most obvious gap between the ambition and the evidence. Construction projects don’t get built without a programme. Neither do blockchains.

- Identify the genesis National Champions. Twelve validators at genesis is a specific claim. Who are they? What hardware do they have? Where are they located? Publishing even a shortlist with hardware attestation would convert a whitepaper concept into a concrete commitment.

Returns Score: 23/100

FC scores 23/100 (F grade). Full methodology at Returns Score Methodology.

Token utility (8/20): No token exists. The whitepaper describes Foundation Coins as the medium for Proof-of-Benefit consensus, where every minted coin proves useful AI work was performed. Four genesis benefit classes are defined: compute-inference, compute-training, data-curation, and agent-orchestration. The design is intellectually coherent and more purposeful than most token utility models. But it is a design in a PDF, not a functioning token in a live economy. Scoring reflects the quality of the concept with a heavy discount because none of it has been built.

Value accrual (5/20): The value accrual model is entirely conceptual. FC would accrue value through scarcity (21M cap, halving schedule), utility demand (staking for validation, Culture Credit reserve backing), and the Proof-of-Benefit requirement tying minting to useful work. On paper, this is one of the more thoughtful value accrual designs in the space. In practice, there is no blockchain, no consensus mechanism, no validators, and no working implementation of any of these mechanisms. The gap between whitepaper and reality is as wide as it gets.

Supply dynamics (10/20): The 21 million hard cap mirroring Bitcoin is the strongest dimension here. No pre-mine is described; coins are minted only through validated work. If implemented as designed, this would be structurally fairer than nearly every token launch in crypto. The halving schedule with 99% circulated within 12 years creates predictable scarcity. The Treasury receiving a programmable share of block rewards is the centralisation vector, with the percentage still undefined. Scored generously for scarcity model design, but the “if implemented” caveat carries significant weight when no code exists.

Revenue sustainability (0/25): Zero. No blockchain means no transaction fees. No token means no staking revenue. No marketplace means no trading fees. The AI models and tools are distributed for free under Apache 2.0. II-Medical, II-Agent, and II-Search generate no revenue for the project or potential token holders. This is commendable from an open-source perspective and disastrous from a returns perspective. There’s no revenue model, no path to revenue, and no disclosed funding to sustain operations until one materialises.

Liquidity and access (0/15): There is no token. No exchange listing, no DEX pool, no OTC market. The unofficial Solana token using the “II” ticker on CoinSwitch has zero liquidity and is not endorsed by the project. Without disclosed funding, the capital backing that typically signals future exchange listings is absent. A score of zero is the only honest assessment for a pre-token project with no tradeable asset of any kind.

Path to improvement

Three changes would materially increase Intelligent Internet’s returns score:

- Build the blockchain and launch a token. Every dimension of this score is hamstrung by the same fundamental problem: nothing exists. A working testnet with Proof-of-Benefit consensus and mineable FC tokens would transform every category from theoretical to assessable. Without a chain, there is nothing to score.

- Establish a revenue model for the AI tools. Free, open-source AI models are a public good but not a returns proposition. Introducing optional paid tiers, enterprise licensing, or on-chain inference fees that flow to token holders would create a revenue stream. The current model generates goodwill but zero economic value for potential FC holders.

- Secure and disclose funding. Building a novel L0 blockchain with BFT consensus, sovereign rollups, and a dual-currency AMM requires significant capital. Transparent disclosure of funding sources and runway would signal that the project can survive long enough to deliver on its whitepaper promises.

Team overview

Emad Mostaque (founder). British-Bangladeshi, born 17 April 1983 in Jordan. BA Mathematics and Computer Science from Oxford University (2005). Diagnosed with Asperger's and ADHD. Son's autism diagnosis (~2011) motivated pivot from finance to AI. 13 years in hedge fund management (Pictet, Capricorn Fund Managers as Co-CIO achieving 20% returns in 2017, ECSTRAT). Co-founded Stability AI (late 2020) with Cyrus Hodes. Stability AI released Stable Diffusion (Aug 2022), downloaded 100M+ times with 300,000+ developers/creators. Raised $174M+, achieving a $1B+ valuation. Resigned as CEO in March 2024 under investor pressure. Controversies: co-founder Cyrus Hodes filed a fraud lawsuit (July 2023) alleging a $100 purchase of his stake before the $1B valuation round; a Forbes investigation (June 2023) found misleading claims about education credentials, an Amazon partnership, and NGO partnerships (UNESCO, OECD, WHO, World Bank denied partnerships); Lightspeed Venture Partners cited mismanagement (Oct 2023); Stability AI had $153M projected losses vs $11M income in 2023. Genuine achievements: Stable Diffusion proved open-source AI could compete with proprietary alternatives; consistent open-source advocacy when most frontier labs went proprietary; active in AI governance and policy. Founded Intelligent Internet post-departure. Invested in Prime Intellect (Series A, March 2025). Based in UK/Panama.

https://x.com/EMostaque

Source: OYM Research · Last updated 2026-04-27

Technical snapshot

Three-layer blockchain architecture (whitepaper design, not yet implemented). Foundation Layer (L0): canonical blockchain ledger, fork of Bitcoin Core v25 with BFT consensus replacing PoW. Records UTXOs, FC issuance, Anchor-Set Merkle roots, and identity hashes. Single-round finality requiring signatures from >=2/3 of validator stake. Culture Layer (L1): sovereign roll-ups operated by National Champions, settling in local Culture Credits (CC) while enforcing data-residency rules. Personal Layer (L2): runs on user devices where II-Agents execute workflows and decide whether to call local models or external APIs. Currently, the L2 tooling and open-source AI outputs are the primary deliverables: II-Agent (open-source agent framework), II-Medical (clinical-grade fine-tuned LLMs downloadable from HuggingFace), II-Search (search-optimised LLMs), CommonGround (multi-agent orchestration), II-Researcher, II-Commons (knowledge base with PostgreSQL + VectorChord), II-Thought (RL training datasets), and SAGE (Sovereign AI Governance Engine, announced at FII9 but not yet shipped). No blockchain infrastructure has been deployed.
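The L0 finality rule described above (signatures from at least 2/3 of validator stake) can be illustrated with a minimal sketch. No project code exists for this yet, so everything here is an assumption for illustration: the function name `has_finality`, the validator names, and the equal stake amounts are all hypothetical.

```python
from fractions import Fraction

def has_finality(stakes: dict[str, int], signers: set[str]) -> bool:
    """Return True if the signers hold at least 2/3 of total validator stake."""
    total = sum(stakes.values())
    if total <= 0:
        return False
    signed = sum(stake for validator, stake in stakes.items() if validator in signers)
    # Exact rational comparison avoids floating-point error at the 2/3 boundary.
    return Fraction(signed, total) >= Fraction(2, 3)

# Twelve hypothetical genesis validators with equal stake (illustrative values only).
stakes = {f"champion-{i}": 100 for i in range(12)}
eight_signers = {f"champion-{i}" for i in range(8)}
seven_signers = {f"champion-{i}" for i in range(7)}

print(has_finality(stakes, eight_signers))  # 8 of 12 equal stakes is exactly 2/3
print(has_finality(stakes, seven_signers))  # 7 of 12 equal stakes falls short
```

With twelve equally staked validators, a block would need eight signatures to finalise under this reading of the rule. Unequal stakes would change the count, which is why the check is weighted by stake and not by head count.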

Source: OYM Research · Last updated 2026-04-27

Tokenomics deep dive

Token utility

- Foundation layer transaction fees (planned)

- Staking by National Champions to participate as validators (planned)

- Settlement currency between Culture Layer roll-ups (planned)

- Backing reserve for Culture Credits (planned)

- Governance participation (planned)

- Treasury funding via block reward share (planned)

Supply

| Max supply | Total supply | Circulating | Circ. % |
|---|---|---|---|
| 21,000,000 | 0 | 0 | 0% |

Method: Proof-of-Benefit mining. Coins minted only when validators attach a signed receipt proving useful compute was performed. Receipt requires: work_root (Merkle root of inputs/outputs/artefacts), benefit_class, eval_score meeting Oracle-set thresholds, producer_id, and timestamp. 21M hard cap mirrors Bitcoin. Initial subsidy 50 FC/block, halving every 210,000 blocks (two epochs). Faster emission than Bitcoin (~99% in 12 years vs Bitcoin's ~2140). Not yet launched.
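The receipt fields listed above can be sketched as a small data structure. This is an illustration under stated assumptions, not the whitepaper's specification: the names `Receipt`, `merkle_root` and `is_mintable` are hypothetical, the hashing and pairing rules are assumed, the threshold values are invented for the example (the source says thresholds are still to be set), and signature verification is omitted.

```python
import hashlib
from dataclasses import dataclass

# The four genesis benefit classes named in the whitepaper summary.
BENEFIT_CLASSES = {
    "compute-inference",
    "compute-training",
    "data-curation",
    "agent-orchestration",
}

def merkle_root(leaves: list[bytes]) -> str:
    """SHA-256 Merkle root. Assumed rule: an unpaired node is paired with itself."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [hashlib.sha256(leaf).digest() for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        level = [
            hashlib.sha256(level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0].hex()

@dataclass(frozen=True)
class Receipt:
    work_root: str      # Merkle root of inputs/outputs/artefacts
    benefit_class: str
    eval_score: float
    producer_id: str
    timestamp: int      # Unix seconds (assumed encoding)

def is_mintable(receipt: Receipt, thresholds: dict[str, float]) -> bool:
    """True if the class is known and the score meets that class's threshold."""
    threshold = thresholds.get(receipt.benefit_class)
    return (
        receipt.benefit_class in BENEFIT_CLASSES
        and threshold is not None
        and receipt.eval_score >= threshold
    )

# Hypothetical thresholds; the real values are deferred to governance.
thresholds = {"compute-inference": 0.8}
receipt = Receipt(
    work_root=merkle_root([b"prompt", b"completion", b"eval-log"]),
    benefit_class="compute-inference",
    eval_score=0.9,
    producer_id="champion-0",
    timestamp=1_700_000_000,
)
print(is_mintable(receipt, thresholds))
```

A real implementation would also verify the validator's signature over the receipt before minting; that step is left out here because the signature scheme is not described in the source.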

| Category | % | Vesting | Cliff |
|---|---|---|---|
| Block rewards (Proof-of-Benefit mining) | -- | Halving every 2 epochs (105,000 blocks each). Initial block subsidy: 50 FC. ~99% circulated within 12 years post-launch. | None |
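The emission figures above can be checked with a short calculation. The sketch below only uses the stated parameters (50 FC initial subsidy, halving every 210,000 blocks, 21M cap); the function name `cumulative_supply` is hypothetical, and fractional subsidies are assumed to be allowed without rounding.

```python
CAP = 21_000_000

def cumulative_supply(
    halving_periods: int,
    initial_subsidy: float = 50.0,
    blocks_per_halving: int = 210_000,
) -> float:
    """FC minted after the given number of complete halving periods."""
    return sum(
        initial_subsidy / 2**k * blocks_per_halving
        for k in range(halving_periods)
    )

for n in range(1, 9):
    minted = cumulative_supply(n)
    print(f"{n} periods: {minted:,.0f} FC ({minted / CAP:.2%} of cap)")
```

Under these parameters the first period mints half the cap, and the 99% mark falls inside the seventh halving period. Fitting that into 12 years would imply a block interval on the order of four to five minutes; that figure is an inference from the numbers quoted here, not a value stated in the source.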

Emissions

The tokenomics design is ambitious and well-articulated in the whitepaper but entirely theoretical. No token exists. No chain exists. The 21M hard cap and halving schedule mirror Bitcoin, which is a deliberate design choice. The dual-currency model (FC as hard scarce reserve, CC as elastic national currencies reserve-backed by FC) is novel and untested. The Proof-of-Benefit mechanism ties every minted coin to verified useful AI work (inference, training, data curation, agent orchestration), which is a genuine innovation over Proof-of-Work's computational waste and Proof-of-Stake's capital-weighted consensus. Key parameters (stake amounts, slashing %, CC reserve floor, Treasury splits, evaluation thresholds) are all deferred to governance or future specification. The whitepaper honestly labels these as TBD rather than fabricating numbers. NOTE: An unofficial Solana token using the ticker 'II' exists on CoinSwitch (address DjwB2SSpLsXwrecQoPnHFn3ki8SbDknurSirxX3oBAGS) with zero liquidity and no trading. This is NOT the official project token. No token has been listed on CoinGecko or CoinMarketCap for Intelligent Internet.

Source: OYM Research · Last updated 2026-04-27

How to participate

Use II-Agent Chat, a free AI assistant supporting multiple LLM backends (Gemini 3, Claude Sonnet 4.5, GPT-5). Available as web app (agent.ii.inc) or self-hosted via Docker/CLI from GitHub (Apache 2.0). Terminal Bench 2 score: 61.8% (above Claude Code at 58.0%). Self-reported SWE-Bench Pro: 45.1%.

Download and run II-Medical models locally for private medical reasoning. Available in 8B and 32B sizes. GGUF format available for llama.cpp, Ollama, and LM Studio; MLX format for Apple Silicon. vLLM and SGLang serving are documented. Runs entirely on your own hardware with no server dependency. II-Medical-8B achieves a 70.49% average across 10 medical benchmarks (comparable to 72B models) and 40% on HealthBench (comparable to OpenAI o1). NOT intended for clinical use.

Download and run II-Search models locally for private search and research. 4B parameter size. GGUF and MLX formats. 91.8% SimpleQA pass rate. Code-Integrated Reasoning variant achieves 72.2% on Frames benchmark.

Use II-Commons hosted beta (commons.ii.inc) for querying arXiv and PubMed knowledge bases. Free beta access. Or self-host using the open-source II-Commons repository with PostgreSQL + VectorChord.

Use training datasets (II-Thought-RL-v0, II-Medical-Reasoning-SFT, II-Search-SFT and others) to train or fine-tune your own models. 20 datasets published on HuggingFace. II-Thought-RL-v0 (342k examples) is the first large-scale multi-task RL dataset. II-Medical-Reasoning-SFT has 2.2M samples.

Contribute to open-source repositories. 14 original repos under the Intelligent-Internet GitHub organisation (Apache 2.0). Primary projects: II-Agent (agent framework), II-Researcher (search/research agents), CommonGround (multi-agent orchestration OS), II-Commons (knowledge base), ii-thought (RL dataset tooling).

Planned: become a National Champion validator. Requires economic FC stake (amount TBD), hardware attestation, symmetric >=10 Gbit/s bandwidth on 3 independent physical paths, <300ms interlink latency, signed heartbeats every second. 12 Champions at genesis, long-term target >=1 per sovereign nation. Admission requires stake + attestation + one-week public objection period + Sentinel majority approval. Not yet available.
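The planned admission criteria can be expressed as a simple checklist. This is a sketch under assumptions, since validator admission is not yet available: the class `ChampionApplication` and function `unmet_requirements` are hypothetical, the stake is modelled as a yes/no flag because the amount is TBD, and the bandwidth rule is read as "at least three paths, each at 10 Gbit/s or more".

```python
from dataclasses import dataclass

MIN_PATHS = 3
MIN_BANDWIDTH_GBPS = 10.0
MAX_LATENCY_MS = 300.0          # latency must be strictly below this
MAX_HEARTBEAT_INTERVAL_S = 1.0
OBJECTION_PERIOD_DAYS = 7

@dataclass
class ChampionApplication:
    stake_posted: bool                 # amount is TBD in the whitepaper
    hardware_attested: bool
    path_bandwidth_gbps: list[float]   # one entry per independent physical path
    interlink_latency_ms: float
    heartbeat_interval_s: float
    objection_days_elapsed: int
    sentinel_votes_for: int
    sentinel_votes_total: int

def unmet_requirements(app: ChampionApplication) -> list[str]:
    """Return the names of requirements the application does not yet meet."""
    problems = []
    if not app.stake_posted:
        problems.append("stake")
    if not app.hardware_attested:
        problems.append("hardware attestation")
    fast_paths = [b for b in app.path_bandwidth_gbps if b >= MIN_BANDWIDTH_GBPS]
    if len(fast_paths) < MIN_PATHS:
        problems.append("bandwidth")
    if app.interlink_latency_ms >= MAX_LATENCY_MS:
        problems.append("latency")
    if app.heartbeat_interval_s > MAX_HEARTBEAT_INTERVAL_S:
        problems.append("heartbeat")
    if app.objection_days_elapsed < OBJECTION_PERIOD_DAYS:
        problems.append("objection period")
    # Simple majority: strictly more than half of Sentinel votes.
    if app.sentinel_votes_for * 2 <= app.sentinel_votes_total:
        problems.append("sentinel approval")
    return problems

application = ChampionApplication(
    stake_posted=True,
    hardware_attested=True,
    path_bandwidth_gbps=[10.0, 25.0, 10.0],
    interlink_latency_ms=120.0,
    heartbeat_interval_s=1.0,
    objection_days_elapsed=7,
    sentinel_votes_for=6,
    sentinel_votes_total=10,
)
print(unmet_requirements(application))
```

An empty list means every listed criterion is satisfied under this reading; any other result names the gaps an applicant would still need to close.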

Source: OYM Research · Last updated 2026-04-27

Community

Governance

Planned: three-phase progressive decentralisation. Phases 0-2: multi-sig controlled by founding Champions, stewards Treasury. Phase 3+: 15-seat Oracle Council (each seat is a Partner-grade II-Agent with Principal/Associate teams). Guardian Lattice enforces rules through three roles: Sentinels (read-only monitors replaying PoB receipts), Advisers (human-readable risk reports), Implementers (time-locked pause key for emergencies). Six-month automatic rotation via stake-weighted election. Living Constitution Assembly with domain experts and sortition-selected jurors. Parameter updates require on-chain proposals, 7-day Sentinel simulation, Oracle Council 2/3 approval, and 30-day grace period. Not yet implemented.
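The parameter-update path described above implies a minimum delay and a vote count that are easy to work out. The sketch below assumes the stages run back to back and that the Oracle Council vote takes no additional time; both are assumptions, as are the function names `votes_needed` and `earliest_activation`.

```python
from datetime import date, timedelta

COUNCIL_SEATS = 15
SIMULATION_DAYS = 7   # Sentinel simulation window
GRACE_DAYS = 30       # grace period after approval

def votes_needed(seats: int = COUNCIL_SEATS) -> int:
    """Smallest whole number of votes that is at least 2/3 of the seats."""
    return (2 * seats + 2) // 3

def earliest_activation(proposed_on: date) -> date:
    """Earliest date a change could take effect if no stage is delayed."""
    return proposed_on + timedelta(days=SIMULATION_DAYS + GRACE_DAYS)

print(votes_needed())
print(earliest_activation(date(2026, 1, 1)))
```

On these assumptions, a change would need 10 of the 15 council seats and could not take effect sooner than 37 days after it is proposed.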

Sentiment

Community is small relative to the ambition of the project and Mostaque's personal following. Discord at 884 members is modest for a project led by a figure with 290k X followers. Most public attention flows through Mostaque's personal account rather than the project account. The open-source AI tooling (II-Agent at 3.2k stars) has attracted genuine developer interest. The philosophical framework (Symbioism, The Last Economy, Master Plan) generates intellectual engagement but the blockchain and tokenomics are not yet discussion topics since nothing is live. Podcast appearances on Cognitive Revolution, New Economies, TWiT.TV demonstrate Mostaque actively evangelises the vision.

Source: OYM Research · Last updated 2026-04-27